As AI applications continue moving beyond simple chatbots, development teams are facing new challenges around orchestration, memory management, and multi-step reasoning. Frameworks such as LangChain and LangGraph have become central to this shift, especially for companies building AI copilots, autonomous workflows, and retrieval-based systems. While the two frameworks belong to the same ecosystem, they are designed for different levels of workflow complexity. Understanding the differences between LangChain and LangGraph is important for teams that want to build scalable, maintainable, and production-ready AI applications.

1. What is LangChain?

LangChain is an open-source framework designed to help developers build applications powered by large language models (LLMs). Instead of working directly with raw model APIs, LangChain provides a structured way to connect AI models with external tools, databases, memory systems, and retrieval pipelines. This makes it easier to develop applications such as AI chatbots, RAG systems, document assistants, and AI search tools.

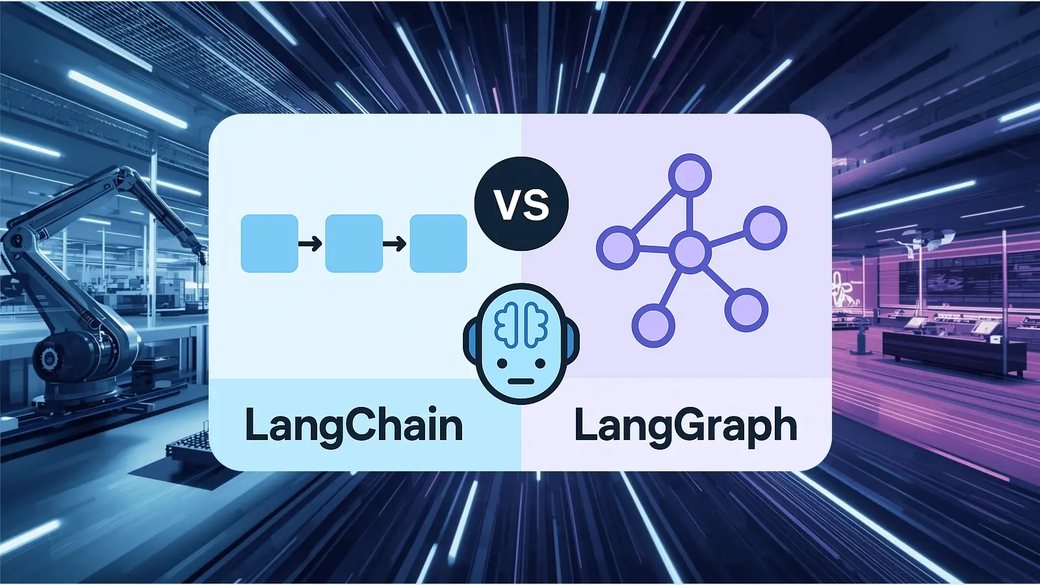

At its core, LangChain uses a chain-based workflow in which tasks are processed step by step in a relatively linear order. A typical process may involve receiving user input, retrieving additional context, calling external tools, and then generating a response:

User input → Prompt → Retrieval or tool call → LLM response
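The linear flow described above can be sketched in plain Python. This is a minimal illustration of the chain idea, not LangChain's actual API: the step functions, the `DOCS` dictionary, and `fake_llm` are hypothetical stand-ins for a prompt template, a retriever, and a model call.

```python
from functools import reduce
from typing import Callable

# Each step receives the running state and returns an updated copy.
Step = Callable[[dict], dict]

# Hypothetical in-memory knowledge base standing in for a vector store.
DOCS = {
    "refund": "Refunds are processed within 5 business days.",
    "shipping": "Standard shipping takes 3 to 7 days.",
}

def build_prompt(state: dict) -> dict:
    return {**state, "prompt": f"Answer the question: {state['question']}"}

def retrieve(state: dict) -> dict:
    # Naive keyword match; a real system would use embedding search.
    question = state["question"].lower()
    hits = [text for key, text in DOCS.items() if key in question]
    return {**state, "context": hits}

def fake_llm(state: dict) -> dict:
    # Placeholder for a model call: it only echoes the retrieved context.
    context = " ".join(state["context"]) or "No context found."
    return {**state, "answer": f"{state['prompt']} | Context: {context}"}

def run_chain(steps: list[Step], state: dict) -> dict:
    # Apply the steps strictly in order, with no branching or loops.
    return reduce(lambda acc, step: step(acc), steps, state)

result = run_chain(
    [build_prompt, retrieve, fake_llm],
    {"question": "How long does a refund take?"},
)
print(result["answer"])
```

The point of the sketch is the shape of `run_chain`: every step runs once, in a fixed order, and the output of one step is the input of the next.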

Because much of the infrastructure already exists within the ecosystem, developers can prototype AI applications relatively quickly without building every component from scratch. LangChain is therefore commonly used for AI chatbots, internal knowledge assistants, document Q&A systems, and retrieval-augmented generation (RAG) applications.

2. What is LangGraph?

LangGraph is a framework developed by the LangChain team to support more advanced AI agent workflows. Unlike LangChain’s mostly sequential execution model, LangGraph uses a graph-based architecture where each node represents a task, agent, or decision point. This structure allows AI systems to handle retries, branching logic, multi-agent coordination, human approval steps, and long-running workflows more effectively.

In practice, LangGraph enables AI applications to behave more like workflow engines than simple chatbots. For example, a coding assistant may involve one agent researching documentation, another generating code, and another validating the output before a final review step. If an issue appears, the workflow can return to a previous stage instead of restarting from the beginning.
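The coding-assistant example can be sketched as a small graph with shared state and one conditional edge. This is a library-free illustration of the pattern, not LangGraph's API: the node functions, the `EDGES` table, `run_graph`, and the `MAX_ATTEMPTS` budget are all hypothetical, and the "generated code" is a hard-coded stub that fails on the first attempt.

```python
from typing import Callable, Optional, Union

State = dict
Node = Callable[[State], State]
Router = Callable[[State], str]

MAX_ATTEMPTS = 3  # hypothetical retry budget

def research(state: State) -> State:
    return {**state, "notes": "add(a, b) should return a + b"}

def generate(state: State) -> State:
    attempt = state.get("attempts", 0) + 1
    # Simulate a buggy first draft and a corrected second draft.
    draft = "return a - b" if attempt == 1 else "return a + b"
    return {**state, "attempts": attempt, "draft": draft}

def validate(state: State) -> State:
    # Stub check; a real validator would run tests against the draft.
    return {**state, "valid": state["draft"] == "return a + b"}

def route_after_validate(state: State) -> str:
    # Conditional edge: loop back to generation instead of restarting.
    if state["valid"] or state["attempts"] >= MAX_ATTEMPTS:
        return "review"
    return "generate"

def review(state: State) -> State:
    return {**state, "status": "approved" if state["valid"] else "rejected"}

NODES: dict[str, Node] = {
    "research": research,
    "generate": generate,
    "validate": validate,
    "review": review,
}

# An edge is a fixed successor, a router function, or None (end of workflow).
EDGES: dict[str, Union[str, Router, None]] = {
    "research": "generate",
    "generate": "validate",
    "validate": route_after_validate,
    "review": None,
}

def run_graph(start: str, state: State) -> State:
    current: Optional[str] = start
    trace: list[str] = []
    while current is not None:
        trace.append(current)
        state = NODES[current](state)
        edge = EDGES[current]
        current = edge(state) if callable(edge) else edge
    return {**state, "trace": trace}

final = run_graph("research", {"task": "write add(a, b)"})
print(final["trace"])
print(final["status"], "after", final["attempts"], "attempts")
```

In this sketch the research step runs only once: when validation fails, the router sends the workflow back to `generate` while the accumulated state (notes, attempt count) is preserved.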

This flexibility makes LangGraph more suitable for enterprise AI systems where workflows are often dynamic and involve multiple tools, APIs, databases, and reasoning stages. As a result, LangGraph is commonly used for AI agents, coding copilots, enterprise automation, research assistants, and multi-agent systems. However, the added flexibility also increases architectural complexity, meaning teams need stronger monitoring, observability, and state management to maintain these workflows effectively.

3. Core differences between LangChain and LangGraph

Although both frameworks belong to the same ecosystem, their design priorities are different.

| Aspect | LangChain | LangGraph |
| --- | --- | --- |
| Core architecture | Chain-based workflows | Graph-based workflows |
| Primary focus | AI applications and RAG | AI agents and orchestration |
| Workflow structure | Mostly sequential | Stateful and dynamic |
| Multi-agent support | Basic | Advanced |
| Memory handling | Moderate | Stronger state persistence |
| Human-in-the-loop | Manual implementation | Better workflow support |
| Complexity level | Easier to start | More advanced |
| Best for | Chatbots, search, RAG | Autonomous AI systems |

The biggest difference is not necessarily the features themselves, but the type of problems each framework is designed to solve:

- LangChain focuses on connecting language models with tools and external data sources through reusable components and integrations.

- LangGraph focuses on managing how AI systems make decisions across multiple stages and agents.

This distinction becomes clearer as workflow complexity increases. A simple RAG assistant may work perfectly with LangChain alone, while an AI workflow involving planning, validation, retries, and collaboration between multiple agents will often benefit from LangGraph’s orchestration model.

Source: auxiliobits.com

4. When should you use each framework?

Choosing between LangChain and LangGraph depends largely on workflow complexity and operational requirements rather than popularity alone. While both frameworks support modern AI applications, they are designed for different levels of orchestration and scalability.

| Scenario | LangChain | LangGraph |
| --- | --- | --- |
| AI chatbots and RAG systems | Highly suitable | Possible but often unnecessary |
| MVP and rapid prototyping | Strong choice | More setup required |
| Workflow complexity | Better for predictable flows | Better for dynamic workflows |
| Multi-agent coordination | Limited | Strong support |
| Long-running execution | Less suitable | Better suited |
| Human approval workflows | Manual setup | More natural support |
| Infrastructure and maintenance | Easier to manage | Higher operational overhead |
| Enterprise AI automation | Moderate | Better for advanced systems |

Note: A common misconception is that LangGraph replaces LangChain entirely. In reality, many development teams use both frameworks together because they address different layers of the AI stack. In a typical architecture:

- LangChain handles retrieval, prompts, tools, and integrations

- LangGraph manages orchestration and workflow execution

For example, LangChain may retrieve enterprise documents from a vector database, while LangGraph determines which agent should process the information next and how the workflow should continue. This combination allows teams to maintain flexibility while still supporting more advanced orchestration patterns when needed.
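The division of responsibilities in this example can be sketched as two separate layers. The code below uses neither library: `retrieve` is a hypothetical stand-in for the retrieval layer (a crude word-overlap search instead of a vector database), and `choose_agent` is a hypothetical stand-in for the orchestration layer. The document records and agent names are invented for illustration.

```python
import re

# Hypothetical document store standing in for a vector database.
DOCUMENTS = [
    {"id": 1, "type": "contract", "text": "Master services agreement with renewal terms."},
    {"id": 2, "type": "invoice", "text": "Invoice for consulting services, due in 30 days."},
]

def tokens(text: str) -> set[str]:
    # Lowercased alphanumeric words, with punctuation stripped.
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str) -> list[dict]:
    # Integration layer: return documents sharing at least one word with the query.
    wanted = tokens(query)
    return [doc for doc in DOCUMENTS if wanted & tokens(doc["text"])]

def choose_agent(docs: list[dict]) -> str:
    # Orchestration layer: decide which agent handles the retrieved material.
    if not docs:
        return "clarification_agent"
    doc_types = {doc["type"] for doc in docs}
    if "contract" in doc_types:
        return "legal_agent"
    return "finance_agent"

for query in ["renewal terms", "invoice due", "weather forecast"]:
    print(query, "->", choose_agent(retrieve(query)))
```

Keeping the two functions separate means the retrieval logic can change (for example, to real embedding search) without touching the routing rules, and vice versa.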

5. Practical considerations before implementation

Before choosing between LangChain and LangGraph, teams should also consider several implementation challenges that commonly appear in production AI systems.

- Overengineering too early: Many teams adopt complex multi-agent architectures immediately, even when a simple RAG pipeline would solve the business problem. Starting with unnecessary orchestration layers can significantly increase development and maintenance complexity.

- Underestimating infrastructure costs: Advanced AI workflows often involve multiple tool calls, retries, and agent interactions. As workflows grow, token usage, latency, and infrastructure costs can increase much faster than expected.

- Limited observability and debugging: AI systems are more unpredictable than traditional applications. Without proper logging and monitoring for prompts, workflow states, and agent decisions, debugging production issues can become extremely difficult.

- Poor state management: Managing memory and context is another common challenge. Storing too much information increases token consumption, while insufficient context can reduce response quality and workflow continuity, especially in long-running AI workflows.

- Lack of human oversight: Fully autonomous execution may sound appealing, but many enterprise environments still require validation checkpoints and human approval stages. This is particularly important in industries such as healthcare, finance, and enterprise operations where accuracy and reliability are critical.
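To make the cost point above concrete, here is a rough back-of-the-envelope model of token growth. It rests on one simplifying assumption: every step re-reads the original prompt plus the outputs of all earlier steps. The function name and the numbers (a 1,000-token prompt, 500 tokens of output per step) are hypothetical and chosen only for illustration.

```python
def estimate_tokens(steps: int, base_prompt: int, output_per_step: int) -> int:
    """Total tokens when each step re-reads the prompt plus all earlier outputs."""
    total = 0
    context = base_prompt
    for _ in range(steps):
        total += context + output_per_step  # input tokens + generated tokens
        context += output_per_step          # the next step also sees this output
    return total

# Hypothetical workload: 1,000-token prompt, 500 tokens generated per step.
for steps in (1, 4, 8):
    print(steps, "steps:", estimate_tokens(steps, 1000, 500), "tokens")
```

Under these assumptions, one step costs 1,500 tokens, four steps cost 9,000, and eight steps cost 26,000. Doubling the number of steps nearly triples the total, because the accumulated context is resent at every step. Retries add further steps on top of this, which is one reason to trim or summarize state between stages.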

The choice between LangChain and LangGraph depends mainly on workflow complexity and application goals. LangChain is often enough for chatbots, RAG systems, and lightweight AI tools, while LangGraph is better suited for multi-agent workflows and long-running AI execution. In many cases, the most effective approach is combining both frameworks within the same AI architecture.

If your business is exploring AI agents, RAG systems, or enterprise AI automation, working with an experienced development partner can help reduce implementation risks and accelerate deployment. At PowerGate Software, our team supports organizations in building scalable AI applications tailored to real business workflows, from AI-powered assistants to complex multi-agent systems.